
Introduction

In this work, we analyze the numerical approximation of the inverse design problem for the Burgers equation, distinguishing the viscous case, $\nu>0$, and the inviscid case, $\nu=0$. Given a time $T>0$ and a target function $u^\ast$, the aim is to identify the initial datum $u_0$ so that the solution at time $t=T$ reaches the target $u^\ast$ or gets as close as possible to it. In particular, we focus on problems with large values of $T$, for which convergence of the numerical schemes in the classical sense of numerical analysis might not suffice to obtain accurate results.
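For reference, the problem described above can be written as follows. This is a sketch under assumptions: the squared $L^2$ distance and the factor $1/2$ are inferred from the terminal condition $\rho^N_j=u^N_j-u^\ast_j$ used in Algorithm 1, the spatial domain is taken to be the whole real line, and the labels (1) and (2) follow the way the functional and the equation are cited in the rest of the text.

$$
\begin{aligned}
&\min_{u_0}\ \mathcal{J}(u_0)=\frac{1}{2}\int_{\mathbb{R}}\bigl(u(x,T)-u^\ast(x)\bigr)^2\,dx, &&(1)\\
&\partial_t u+\partial_x\!\left(\frac{u^2}{2}\right)=\nu\,\partial_{xx}u,\quad x\in\mathbb{R},\ t\in(0,T),\qquad u(x,0)=u_0(x). &&(2)
\end{aligned}
$$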

This issue is motivated by the challenging problem of sonic-boom minimization for supersonic aircraft, which is governed by a Burgers-like equation. The travel time of the signal to the ground is larger than the time scale of the initial disturbance by orders of magnitude, and this motivates our study of large-time control of the sonic-boom propagation.

Description of the numerical algorithms

To implement the problem numerically, we opt for a discretization of (2) using classical conservative schemes. Let us denote spatial nodes $x_{j+1/2}={\Delta x}(j+1/2)$, $j\in\mathbb{Z}$, and time instants $t_n=n{\Delta t}$, $n\in\mathbb{N}\cup\{0\}$, where ${\Delta x},{\Delta t}>0$ are the mesh size and time-step, respectively. We approximate the solution $u$ of (2) by a piecewise constant function $u_\Delta$ such that

where

Here, $N=\lceil T/{ {\Delta t} }\rceil$ is the number of time-steps needed to reach $T$. We denote $g^n_{j+1/2}=g(u^n_j,u^n_{j+1})$, where the function $g$ is the numerical flux. In this paper we compare two fluxes:
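To make the role of the numerical flux concrete, here is a minimal Python sketch of one conservative time step with the two fluxes named later in the text, Engquist-Osher (EO) and modified Lax-Friedrichs (MLF). The flux formulas are the standard ones for $f(u)=u^2/2$ and are assumptions here, as are the explicit centered treatment of the viscous term, the zero ghost cells outside the grid, and the function names `flux_eo`, `flux_mlf` and `step`.

```python
def flux_eo(u, v):
    """Engquist-Osher flux for f(u) = u^2/2 (assumed standard form)."""
    return u * (u + abs(u)) / 4.0 + v * (v - abs(v)) / 4.0


def flux_mlf(u, v, dx, dt):
    """Modified Lax-Friedrichs flux (assumed form: half the Lax-Friedrichs viscosity)."""
    return (u * u + v * v) / 4.0 - (dx / dt) * (v - u) / 4.0


def step(u, flux, dx, dt, nu=0.0):
    """One explicit conservative step; cells outside the grid are taken as zero."""
    ext = [0.0] + list(u) + [0.0]               # zero ghost cells (assumption)
    lam, mu = dt / dx, nu * dt / dx ** 2
    # g[i] is the flux through the interface between ext[i] and ext[i + 1]
    g = [flux(ext[i], ext[i + 1]) for i in range(len(ext) - 1)]
    return [
        ext[i] - lam * (g[i] - g[i - 1])
        + mu * (ext[i + 1] - 2.0 * ext[i] + ext[i - 1])
        for i in range(1, len(ext) - 1)
    ]
```

A call such as `step(u, flux_eo, dx, dt, nu)` advances the cell values by one time step; for MLF the mesh parameters can be bound first, for example `step(u, lambda a, b: flux_mlf(a, b, dx, dt), dx, dt)`. Both fluxes are consistent, that is, $g(u,u)=u^2/2$.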

In the simulations we follow the discrete approach to optimization. For the discretization of (1), we consider a simple quadrature rule:

where the target function $u^\ast$ has been discretized in the same manner as the initial data $u_0$ in (3).
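A rectangle rule on the cells of the mesh is one natural choice for this quadrature. The display below is a sketch under that assumption: the factor $1/2$ and the squared difference are inferred from the terminal condition $\rho^N_j=u^N_j-u^\ast_j$ in Algorithm 1, and the label (4) follows the way the discrete functional is cited in the text.

$$
\mathcal{J}(u^0_\Delta)=\frac{\Delta x}{2}\sum_{j\in\mathbb{Z}}\bigl(u^N_j-u^\ast_j\bigr)^2. \qquad (4)
$$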

Regarding the optimization technique, we use the classical gradient descent method based on the adjoint methodology. Following the discrete approach, it is easy to obtain the corresponding discrete adjoint system for (3):

To minimize (4), we will take the descent direction given by:

This direction is obtained by the same arguments as at the continuous level. Thus, the new initial data $u_\Delta^{0,\varepsilon}$ will be given by

for some $\varepsilon>0$ small enough.
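Written out, and assuming that (3) is the explicit three-point conservative update with numerical flux $g$ and a centered viscous term, differentiating one time step with respect to $u^n_j$ leads to a backward recursion of the following form. Here $\partial_1 g$ and $\partial_2 g$ denote the partial derivatives of $g$ with respect to its first and second arguments. The first and last lines are the terminal condition and the update used in Algorithm 1; the constant factor ${\Delta x}$ of the gradient is absorbed into $\varepsilon$.

$$
\begin{aligned}
\rho^N_j &= u^N_j-u^\ast_j,\\
\rho^n_j &= \rho^{n+1}_j+\frac{\Delta t}{\Delta x}\Bigl[\partial_1 g^n_{j+1/2}\bigl(\rho^{n+1}_{j+1}-\rho^{n+1}_j\bigr)+\partial_2 g^n_{j-1/2}\bigl(\rho^{n+1}_j-\rho^{n+1}_{j-1}\bigr)\Bigr]\\
&\quad+\nu\frac{\Delta t}{\Delta x^2}\bigl(\rho^{n+1}_{j+1}-2\rho^{n+1}_j+\rho^{n+1}_{j-1}\bigr),\qquad n=N-1,\dots,0,\\
u^{0,\varepsilon}_j &= u^0_j-\varepsilon\,\rho^0_j.
\end{aligned}
$$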

The pseudocode for the complete algorithm can be found in Algorithm 1. Note that it is valid both for the viscous and the inviscid case of the Burgers equation.

Algorithm 1: Solve discrete optimization problem

Input:

- for $j=0$ to $M$ do
  - set $u^{0,new}_j = u^0_j$
- end for
- compute $\{u^n_j\}^{n=1,...,N}_{j=0,...,M}$ from $\{u^{0,new}_j\}_{j=0,...,M}$ using (3)
- compute functional $\mathcal{J}(u_\Delta^{0,new})$ using (4)
- while stopping criteria are not met do
  - for $j=0$ to $M$ do
    - set $u^{0,old}_j = u^{0,new}_j$
    - set $\rho^N_j = u^N_j-u^\ast_j$
  - end for
  - compute $\{\rho^0_j\}_{j=0,...,M}$ from $\{\rho^N_j\}_{j=0,...,M}$ and $\{u^n_j\}^{n=1,...,N}_{j=0,...,M}$ using (5)
  - compute descending step-size $\varepsilon$
  - for $j=0$ to $M$ do
    - set $u^{0,new}_j = u^{0,old}_j-\varepsilon \rho^0_j$
  - end for
  - compute $\{u^n_j\}^{n=1,...,N}_{j=0,...,M}$ from $\{u^{0,new}_j\}_{j=0,...,M}$ using (3)
  - compute functional $\mathcal{J}(u_\Delta^{0,new})$ using (4)
- end while
- for $j=0$ to $M$ do
  - set $u^{*,0}_j = u^{0,new}_j$
- end for

Output: Optimal solution
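The loop of Algorithm 1 can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the code behind the reported results: it uses the Engquist-Osher flux in its standard form, an explicit centered viscous term, zero ghost cells outside the grid, and a simple backtracking rule (halve $\varepsilon$ until the functional decreases) for the step-size, which the algorithm leaves unspecified. The names `forward`, `functional`, `adjoint` and `descend` are hypothetical.

```python
import math


def eo_flux(u, v):
    """Engquist-Osher flux for f(u) = u^2/2 (assumed standard form)."""
    return u * (u + abs(u)) / 4.0 + v * (v - abs(v)) / 4.0


def forward(u0, n_steps, lam, mu):
    """Return all states [u^0, ..., u^N]; zero ghost cells (assumption)."""
    states = [list(u0)]
    for _ in range(n_steps):
        e = [0.0] + states[-1] + [0.0]
        g = [eo_flux(e[i], e[i + 1]) for i in range(len(e) - 1)]
        states.append([e[i] - lam * (g[i] - g[i - 1])
                       + mu * (e[i + 1] - 2.0 * e[i] + e[i - 1])
                       for i in range(1, len(e) - 1)])
    return states


def functional(u_final, target, dx):
    """Rectangle-rule discretization of half the squared L2 distance."""
    return 0.5 * dx * sum((a - b) ** 2 for a, b in zip(u_final, target))


def adjoint(states, target, lam, mu):
    """Backward sweep: compute rho^0 starting from rho^N = u^N - u*."""
    rho = [a - b for a, b in zip(states[-1], target)]
    for u in reversed(states[:-1]):
        e = [0.0] + u + [0.0]
        r = [0.0] + rho + [0.0]
        rho = [r[i]
               + lam * max(e[i], 0.0) * (r[i + 1] - r[i])   # d1 g at j+1/2
               + lam * min(e[i], 0.0) * (r[i] - r[i - 1])   # d2 g at j-1/2
               + mu * (r[i + 1] - 2.0 * r[i] + r[i - 1])
               for i in range(1, len(e) - 1)]
    return rho


def descend(u0, target, dx, dt, T, nu=0.0, tol=1e-10, max_iter=200):
    """Gradient descent on the initial datum; returns it with the history of J."""
    lam, mu = dt / dx, nu * dt / dx ** 2
    n_steps = math.ceil(T / dt)
    u_new = list(u0)
    states = forward(u_new, n_steps, lam, mu)
    J = functional(states[-1], target, dx)
    history = [J]
    for _ in range(max_iter):
        if J < tol:
            break
        rho0 = adjoint(states, target, lam, mu)
        eps = 1.0
        while eps > 1e-8:                    # backtracking (assumption)
            trial = [a - eps * r for a, r in zip(u_new, rho0)]
            trial_states = forward(trial, n_steps, lam, mu)
            J_trial = functional(trial_states[-1], target, dx)
            if J_trial < J:
                break
            eps *= 0.5
        else:
            break                            # no decrease found: stop iterating
        u_new, states, J = trial, trial_states, J_trial
        history.append(J)
    return u_new, history
```

The stopping criteria here are a tolerance on the functional, an iteration cap, and failure of the backtracking search. With the normalization $\rho^N_j=u^N_j-u^\ast_j$, the gradient of the discrete functional with respect to $u^0_j$ is ${\Delta x}\,\rho^0_j$, which can be checked against finite differences.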

Numerical viscosity

It is well known that (3) can be rewritten in its viscous form in the following manner:

where $R$ is uniquely defined by

In the case of the numerical fluxes that we consider in this paper, we have:

Both schemes are convergent in the classical sense of numerical analysis. However, the large-time behavior of $u_\Delta$ depends on the degree of homogeneity of the term $R$. More precisely, let us assume that there exists $\alpha\in\mathbb{R}$ such that

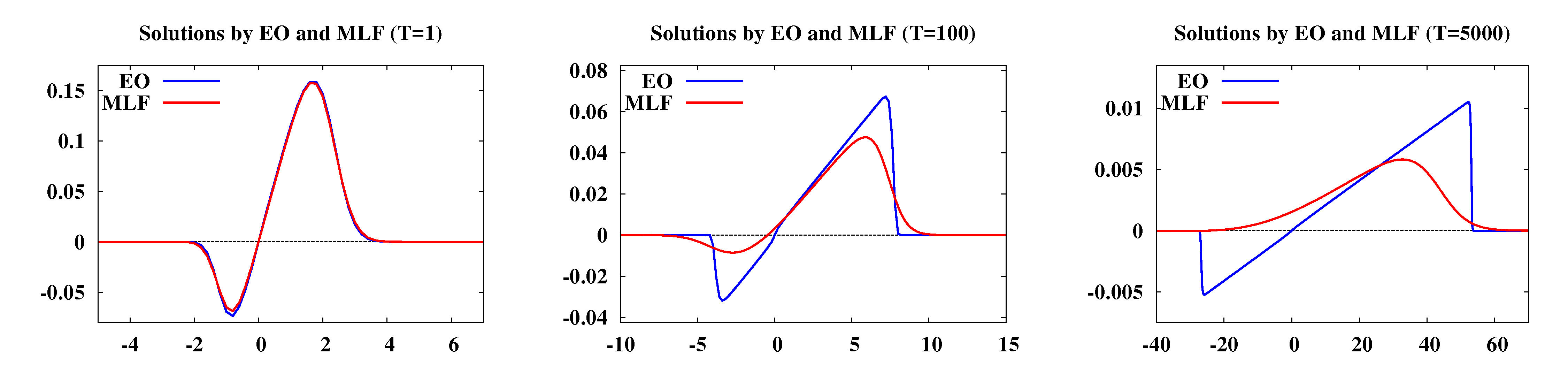

It is clear that $\alpha=2$ for Engquist-Osher and $\alpha=1$ for modified Lax-Friedrichs. Thus, the numerical viscosity inherent in MLF drives the system into a diffusive wave too early and, consequently, continuous metastable states are not reproduced numerically.
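The two degrees of homogeneity can be checked numerically. The sketch below assumes the usual viscous-form identity $R(u,v)=\frac{\Delta t}{\Delta x}\bigl[\tfrac{1}{2}(f(u)+f(v))-g(u,v)\bigr]$ with $f(u)=u^2/2$ and the standard forms of the two fluxes; the normalization of $R$ may differ from the one used above by a constant factor, which does not affect $\alpha$. The function names are hypothetical, and the mesh parameters are those of Figure 1.

```python
import math


def flux_eo(u, v):
    """Engquist-Osher flux for f(u) = u^2/2 (assumed standard form)."""
    return u * (u + abs(u)) / 4.0 + v * (v - abs(v)) / 4.0


def flux_mlf(u, v, dx, dt):
    """Modified Lax-Friedrichs flux (assumed form)."""
    return (u * u + v * v) / 4.0 - (dx / dt) * (v - u) / 4.0


def viscosity_term(flux, u, v, dx, dt):
    """R(u, v) = (dt/dx) * [(f(u) + f(v)) / 2 - g(u, v)] with f(u) = u^2/2."""
    return (dt / dx) * ((u * u + v * v) / 4.0 - flux(u, v))


def homogeneity_degree(R, u, v, scale=2.0):
    """Estimate alpha from R(scale*u, scale*v) = scale**alpha * R(u, v)."""
    return math.log(R(scale * u, scale * v) / R(u, v)) / math.log(scale)


dx, dt = 0.2, 0.5  # mesh parameters quoted for Figure 1


def R_eo(u, v):
    return viscosity_term(flux_eo, u, v, dx, dt)


def R_mlf(u, v):
    return viscosity_term(lambda a, b: flux_mlf(a, b, dx, dt), u, v, dx, dt)


alpha_eo = homogeneity_degree(R_eo, 0.1, 0.3)    # close to 2
alpha_mlf = homogeneity_degree(R_mlf, 0.1, 0.3)  # close to 1
```

Under these assumptions the terms reduce to $R^{EO}(u,v)=\frac{\Delta t}{4\Delta x}\bigl(v|v|-u|u|\bigr)$ and $R^{MLF}(u,v)=\frac{v-u}{4}$. For small amplitudes, which is the regime reached at large times, the quadratic EO term becomes negligible while the linear MLF term does not.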

Figure 1: Solutions of (2) with $\nu=10^{-6}$ at $t=1$, $t=100$ and $t=5000$, using (3) with ${\Delta x}=0.2$, ${\Delta t}=0.5$ and numerical fluxes EO (blue) and MLF (red).


The main aim of our work is to emphasize that ignoring the dynamics of the continuous model at the numerical level can produce undesired results in optimal control problems over large time horizons. On the one hand, we show that the gradient descent method performs successfully whenever the numerical flux and the mesh sizes are chosen appropriately. On the other hand, we present examples where excessive numerical viscosity dominates the physical one.